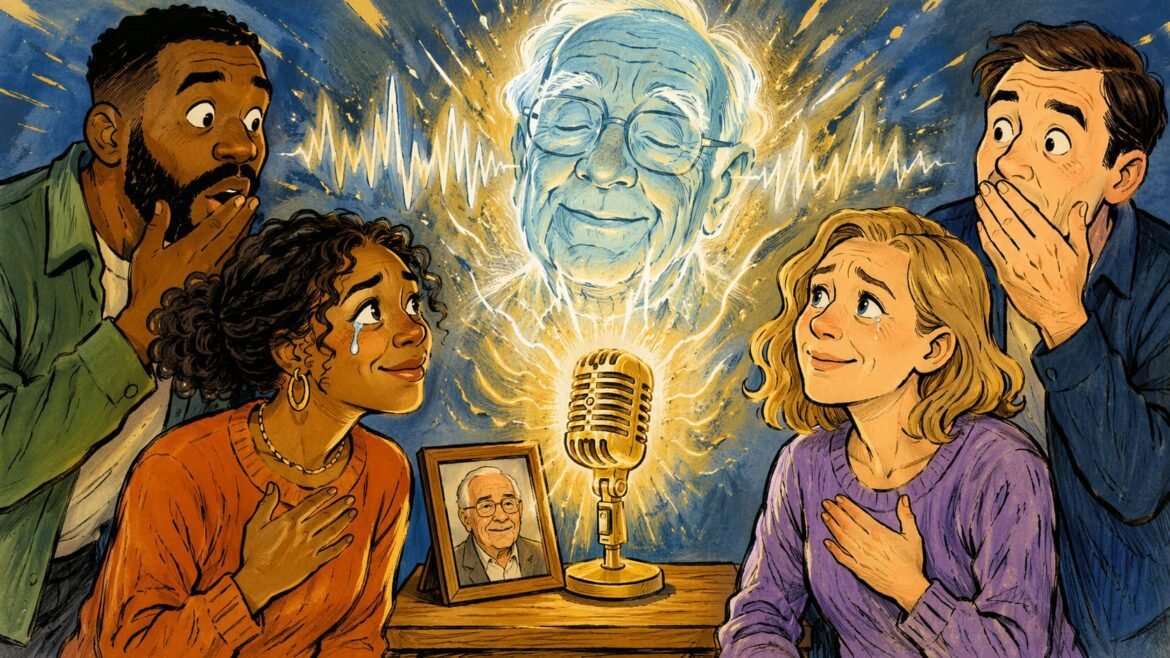

The voice arrived before the room was ready for it. Not as a ghost, not as a memory, but as something sharper, cleaner, almost rehearsed. A daughter pressed play and heard her late father speak again, with the same cadence, the same pauses between thoughts, the same quiet authority that once filled a dinner table. It felt less like technology and more like trespassing. Somewhere between grief and curiosity, a new industry has emerged, one that turns absence into product and memory into interface. AI voice cloning, deepfake audio, digital resurrection: these are no longer fringe experiments. They are quietly becoming part of everyday life, slipping into voicemails, documentaries, podcasts, even personal rituals. Death used to be a boundary. Now it feels more like a glitch.

The shift is not just technical. It is cultural, almost philosophical. For centuries, silence was the final language of death. The last word spoken held weight because it was final. Now finality is dissolving. When AI can recreate voices with unsettling accuracy, the idea of a “last conversation” begins to feel negotiable. The human mind, already fragile in the face of loss, now has an escape hatch. Grief becomes editable. Closure becomes optional. And something strange happens when closure disappears. People do not move on. They linger, looping conversations that were never meant to continue.

Consider how quickly this has moved from lab curiosity to emotional infrastructure. Filmmakers have used AI to recreate the voices of actors long gone, raising debates about consent and artistic integrity. Families have experimented with voice models trained on archived recordings, trying to preserve presence beyond death. Tech companies speak about “legacy avatars” and “digital continuity” with the calm tone of product managers, as if they were discussing cloud storage rather than the boundaries of mortality. It sounds efficient. It feels unsettling. The language of innovation rarely admits when it is tampering with something sacred.

A small studio in London worked on a documentary in which a deceased journalist’s voice was recreated from archived audio. The result was praised for its emotional impact, yet critics quietly asked a harder question. Who owns a voice after death? The estate? The audience? The algorithm trained on fragments of a life? The filmmaker described the experience as “giving the story back its narrator.” Another observer called it something else entirely: a simulation that risks confusing memory with performance. Both statements can be true at the same time, and that tension is where the culture now lives.

You can feel the pull of it even if you resist it. There is something deeply human about wanting one more conversation, one more piece of advice, one more laugh that sounds exactly like it used to. That desire is not new. What is new is the tool that responds to it. AI does not just preserve memory. It animates it. It creates the illusion of presence, and the illusion is convincing enough to blur emotional boundaries. The brain does not always care that something is synthetic. It reacts to familiarity. It reacts to tone. It reacts to voice.

A product designer named Kofi once built a private voice model of his grandmother using years of recorded phone calls. He said it helped him through a difficult year. He could “hear her wisdom again,” as he put it. Months later, he admitted something more complicated. The comfort began to feel like dependence. He found himself asking the model questions he already knew the answers to, just to hear her voice respond. What started as preservation slowly turned into a loop. The tool did not replace grief. It stretched it.

This is where the deeper tension sits. Technology promises continuity, but human meaning often depends on limits. Scarcity gives moments weight. Endings give stories shape. When everything can be extended, replayed, or reconstructed, meaning starts to thin out. The risk is not that AI will replace memory. The risk is that it will overwrite it with something smoother, more convenient, and less honest. Real memories are messy. They fade, distort, and sometimes contradict themselves. That imperfection is part of what makes them human. A generated voice, no matter how accurate, carries a different texture. It is too precise. Too obedient.

There is also a quiet economic layer beneath all this. Voices are becoming assets. Likeness is becoming intellectual property. Entire business models are forming around posthumous presence. The entertainment industry has already begun to explore this space, with estates licensing digital recreations of performers. What happens when ordinary people enter that same system? When a voice becomes something that can be bought, sold, or replicated at scale, identity itself starts to behave like a commodity. It raises questions that do not have easy answers. Not about capability, but about permission.

Somewhere in a dim editing room, a waveform glows on a screen. It looks like any other piece of audio, peaks and valleys arranged in familiar patterns. Yet behind those shapes sits something heavier. A human life, reduced to data points that can be rearranged, replayed, extended. The engineer adjusts a parameter, presses play, and the voice returns again, steady, convincing, almost alive. There is no ceremony in that moment, no acknowledgement of the boundary being crossed. Just a quiet continuation of a process that no longer feels unusual.

And maybe that is the most unsettling part. Not the technology itself, but how quickly it begins to feel normal. The first time a voice returns, it feels like a miracle. The tenth time, it feels like a feature. The hundredth time, it becomes invisible. Culture does not collapse all at once. It shifts in small, almost polite increments until something fundamental has changed and no one can point to the exact moment it happened. Silence used to mean something. Now it competes with playback.

Somewhere, a voice speaks again where it should not, and the room listens as if nothing has changed, leaving only one quiet question hanging in the air, waiting for someone brave enough to answer it: when memory can talk back, will anyone still learn how to let go?